The open-source, privacy-first architecture positions the trio as an alternative to cloud-centric wearables.

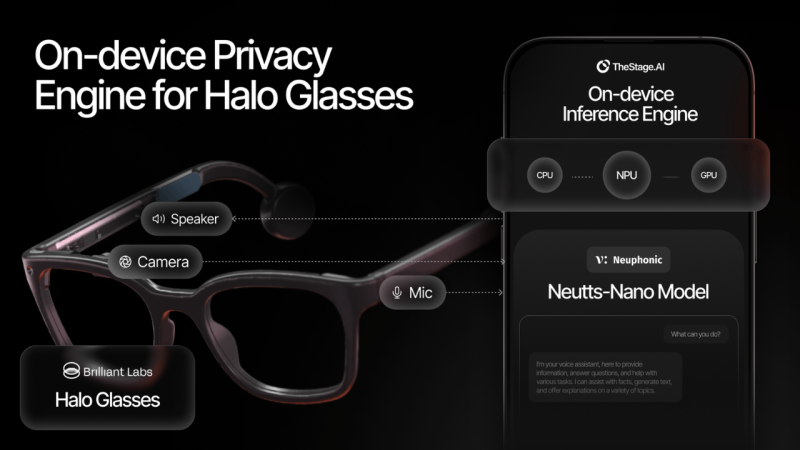

Startups Brilliant Labs, Neuphonic and TheStage AI today announced a strategic partnership to enable frontier AI in wearable technology without the latency and privacy compromises of cloud computing.

Currently, AI inference on wearables, such as audio or image analysis, typically relies on models hosted in the cloud, adding latency and risking user data exposure. This partnership addresses the issue by moving processing directly into consumer hardware, protecting sensitive point-of-view data and delivering faster response times.

Brilliant Labs is gearing up for the launch of Halo, their latest smart glasses. In addition to on-device vision inference, Halo will use Neuphonic’s Conversational AI models on an inference engine built by TheStage AI.

This architecture challenges the foundation of cloud-dependent offerings from giants like Meta and Snap, placing user privacy and low latency at the core of the user experience.

Brilliant Labs will release its groundbreaking AI memory feature through its open-source eyewear platform in one of the slimmest form factors available.

Neuphonic will deliver the conversational interface, where their ultra-low-latency text-to-speech technology runs locally, turning the device into a conversational partner with human-like responsiveness.

Finally, TheStage AI will provide an automated inference engine that optimises AI models to run efficiently on the edge, ensuring instant processing without draining the battery.

By combining these strengths, Brilliant Labs is delivering an unprecedented AI wearable experience to its customers where voice, vision, and sensor data is processed locally on the user’s device: no raw point-of-view data ever leaves the user’s phone or glasses. This architecture stands in stark contrast to cloud-dependent AI models that rely on remote inference for messaging, voice, and image analysis.

Recent investigations have raised questions about whether major platforms have reneged on their privacy promises. Those concerns only grow more acute as AI systems expand beyond text into always-on microphones and cameras.

This partnership offers an alternative: intelligent wearables that keep sensitive visual and conversational data local, while delivering advanced AI through an open-source stack designed for transparency, trust, and independent scrutiny.

“We believe in a privacy-first future for personal computing. AI glasses are soon going to be everywhere around us: always-on cameras and microphones capturing our lives. That’s either exciting or terrifying, depending on where that data lives and who is monetising it,” said Bobak Tavangar, CEO of Brilliant Labs and former Apple program lead.

“I don’t want my children growing up in a world where that data is sold to the highest bidder. This partnership shows there’s a better way.

“And by embracing open source, we want people to understand how these systems work, build upon them, and ultimately foster trust. That’s the standard we think the industry should be held to.”

“When you’re having a conversation, speed and privacy are everything. You cannot wait for the cloud to think,” said Sohaib Ahmad, CEO of Neuphonic.

“We provide the ‘voice’ of this new ecosystem. By running our advanced speech models directly on Brilliant’s hardware, we’ve unlocked a conversational experience that feels real, immediate, and completely private.”

TheStage AI ensures this heavy lifting happens smoothly. “Great hardware and great models need a bridge, and that is what we build,” said Kirill Solodskikh, CEO at TheStage AI.

“Running conversational AI on a pair of glasses is a massive computational challenge.

“You have to manage peak memory, latency, and power consumption to make responses feel immediate.

“Our core technology, ANNA, optimises Neuphonic’s models and supporting components, including transcription, wake word, and diarisation, so they run efficiently on a smartphone paired with the glasses. Deploying the optimised models on GPU and NPU accelerators delivers the best performance possible on-device.”

Looking forward, Brilliant’s Halo glasses will support:

- Context-aware, conversational AI that sees and hears in real time.

- Private memory that indexes what the user sees and hears for later recall and personalised context.

- Vibe Mode, a natural-language interface that generates custom AI mini-apps on demand, from AI agents to enterprise workflows.

All visual and audio inputs are processed on-device and converted into encrypted embeddings. Brilliant Labs’ Halo glasses with integrated Neuphonic voice AI will be available in Q1 2026 at $349.